How To Do A CentOS 6.0 Network Installation (Over HTTP)

CentOS 6.0 is based on the upstream release EL 6.0 and includes packages from all variants. All upstream repositories have been combined into one to make it easier for end users to work with.

A CentOS 6.0 network installation (CentOS 6.0 NetInstall) starts from a very small downloaded ISO image, which then downloads the files needed to complete the full operating system installation on the fly. This tutorial shows the process of installing CentOS 6.0 using the HTTP NetInstall method. This method is much faster for basic systems, since you don't have to download multiple ISO files or one huge DVD-based ISO just to get started. If you are installing many systems, you may want to look into the stand-alone DVD, as it will save time in the end.

Goals

This tutorial shows how to install a fresh copy of CentOS 6.0 using the network installation method. I do not issue any guarantee that this will work for you!

Download CentOS 6.0 Net Install (NetInstall) Image

Select mirror here:

i386 version

x86_64 version

Burn Image to CD and Boot Computer Using CentOS 6.0 Installation CD

Check the MD5 sum of the CentOS image and burn the image to a CD with your favorite CD burner. Then boot the computer from the CentOS installation CD.
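The checksum comparison can be scripted. This is only a minimal sketch: it assumes the md5sum tool is available, and the image file name and digest in the usage comment are placeholders, not values from this tutorial.

```shell
#!/bin/sh
# verify_md5 FILE EXPECTED: succeed only if FILE's MD5 digest equals EXPECTED.
verify_md5() {
    actual=$(md5sum "$1" | awk '{print $1}')
    [ "$actual" = "$2" ]
}

# Hypothetical usage (file name and digest are placeholders):
#   verify_md5 CentOS-6.0-i386-netinstall.iso "<digest published by the mirror>" \
#       && echo "image OK" || echo "image corrupt, download again"
```

Take the expected digest from the checksum file published next to the image on the mirror you selected.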

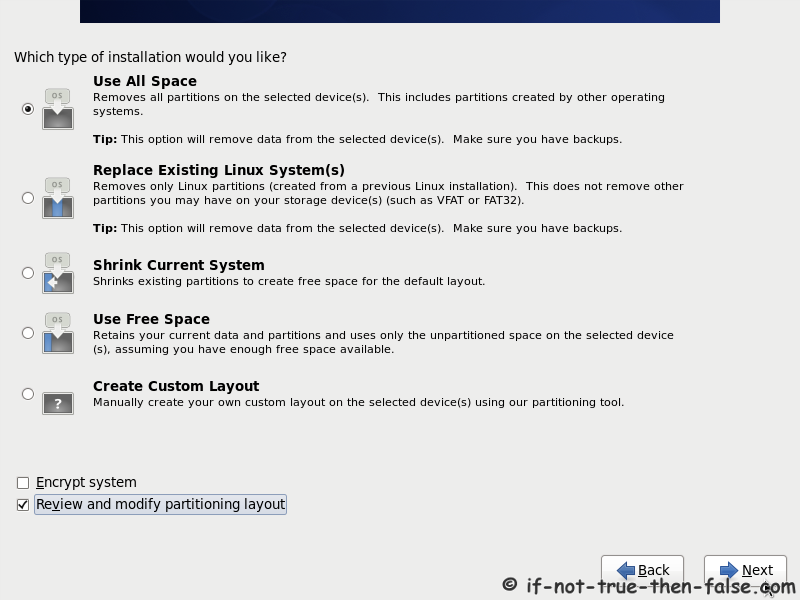

Install The Base System

Boot from your CentOS 6.0 CD. Select 'Install or Upgrade an existing system' and press enter at the boot prompt:

It can take a long time to test the installation media so we skip this test here:

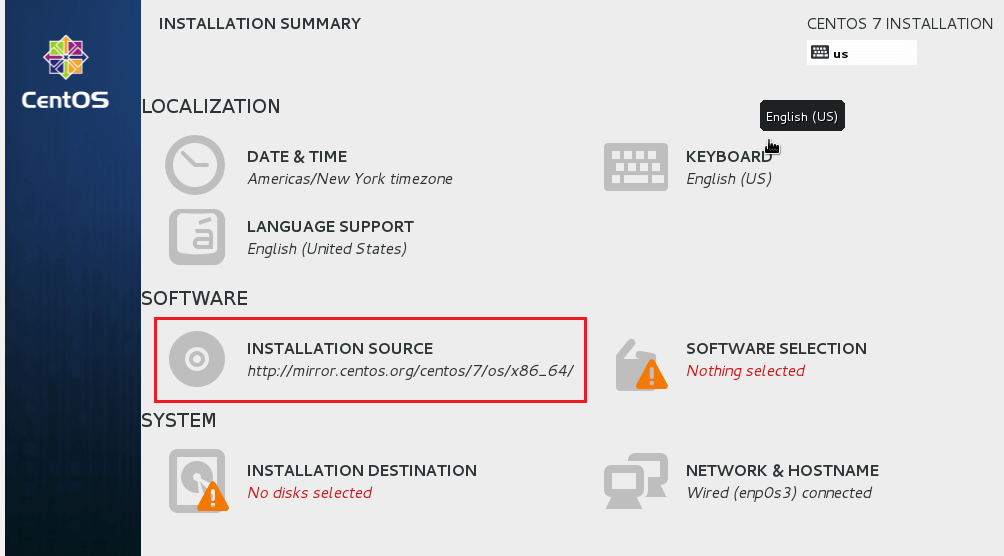

Select URL as an installation method.

If you have multiple network devices on this system, you have to specify which network device is connected to the Internet.

Configure your TCP/IP settings according to your Internet connection.

Enter http://mirrors.kernel.org/centos/6.0/os/i386 as the CentOS installation URL. You can select another mirror that is geographically close to you. If you are using a proxy server, you also have to enter your proxy URL and username/password.

The process of retrieving the image files starts now. So be patient!

Still have to wait!!!

Congratulations! The welcome screen appears. Select OK:

Choose your language next:

Select your keyboard layout:

Choose your time zone:

The author selected the Free and Open Source Fund to receive a donation as part of the Write for DOnations program.

Introduction

Apache Kafka is a popular distributed message broker designed to efficiently handle large volumes of real-time data. A Kafka cluster is not only highly scalable and fault-tolerant, but it also has a much higher throughput compared to other message brokers such as ActiveMQ and RabbitMQ. Though it is generally used as a publish/subscribe messaging system, a lot of organizations also use it for log aggregation because it offers persistent storage for published messages.

A publish/subscribe messaging system allows one or more producers to publish messages without considering the number of consumers or how they will process the messages. Subscribed clients are notified automatically about updates and the creation of new messages. This system is more efficient and scalable than systems where clients poll periodically to determine if new messages are available.

In this tutorial, you will install and use Apache Kafka 2.1.1 on CentOS 7.

Prerequisites

To follow along, you will need:

- One CentOS 7 server and a non-root user with sudo privileges. Follow the steps specified in this guide if you do not have a non-root user set up.

- At least 4GB of RAM on the server. Installations without this amount of RAM may cause the Kafka service to fail, with the Java virtual machine (JVM) throwing an “Out Of Memory” exception during startup.

- OpenJDK 8 installed on your server. To install this version, follow these instructions on installing specific versions of OpenJDK. Kafka is written in Java, so it requires a JVM; however, its startup shell script has a version detection bug that causes it to fail to start with JVM versions above 8.

Step 1 — Creating a User for Kafka

Since Kafka can handle requests over a network, you should create a dedicated user for it. This minimizes damage to your CentOS machine should the Kafka server be compromised. We will create a dedicated kafka user in this step, but you should create a different non-root user to perform other tasks on this server once you have finished setting up Kafka.

Logged in as your non-root sudo user, create a user called kafka with the useradd command. The -m flag ensures that a home directory will be created for the user. This home directory, /home/kafka, will act as our workspace directory for executing commands in the sections below.

Set the password using passwd.

Add the kafka user to the wheel group with the usermod command, so that it has the privileges required to install Kafka’s dependencies.

Your kafka user is now ready. Log into this account using su.

Now that we’ve created the Kafka-specific user, we can move on to downloading and extracting the Kafka binaries.
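The commands for this step could look like the sketch below. The exact options are assumptions based on the description above. The run helper is not part of any tool: it is a small wrapper added here so that the commands are only printed unless APPLY=1 is set.

```shell
#!/bin/sh
# Print each command by default; set APPLY=1 to actually execute them.
run() {
    if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "+ $*"; fi
}

run sudo useradd kafka -m           # -m creates the home directory /home/kafka
run sudo passwd kafka               # prompts for the new password
run sudo usermod -aG wheel kafka    # wheel membership grants sudo rights on CentOS
run su -l kafka                     # switch to the new account
```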

Step 2 — Downloading and Extracting the Kafka Binaries

Let’s download and extract the Kafka binaries into dedicated folders in our kafka user’s home directory.

To start, create a directory in /home/kafka called Downloads to store your downloads.

Use curl to download the Kafka binaries.

Create a directory called kafka and change to this directory. This will be the base directory of the Kafka installation.

Extract the archive you downloaded using the tar command. We specify the --strip 1 flag to ensure that the archive’s contents are extracted in ~/kafka/ itself and not in another directory (such as ~/kafka/kafka_2.11-2.1.1/) inside of it.

Now that we’ve downloaded and extracted the binaries successfully, we can move on to configuring Kafka to allow for topic deletion.
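As a rough sketch, the download and extraction might be scripted as follows. The download URL is an assumption (the Apache archive location for the 2.1.1 release), and the sketch uses tar's -C option instead of changing into the directory first. The run helper is an illustrative wrapper that only prints the commands unless APPLY=1 is set.

```shell
#!/bin/sh
# Print each command by default; set APPLY=1 to actually execute them.
run() {
    if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "+ $*"; fi
}

# Assumed download location for the 2.1.1 release (not given in the text above).
KAFKA_URL="https://archive.apache.org/dist/kafka/2.1.1/kafka_2.11-2.1.1.tgz"

run mkdir -p "$HOME/Downloads"
run curl "$KAFKA_URL" -o "$HOME/Downloads/kafka.tgz"
run mkdir -p "$HOME/kafka"
# --strip-components=1 is the long form of --strip 1: it drops the leading
# kafka_2.11-2.1.1/ directory so the files land directly in ~/kafka.
run tar -xzf "$HOME/Downloads/kafka.tgz" -C "$HOME/kafka" --strip-components=1
```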

Step 3 — Configuring the Kafka Server

Kafka’s default behavior will not allow us to delete a topic (the category, group, or feed name to which messages can be published). To modify this, let’s edit the configuration file.
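The edit can also be scripted instead of made by hand. A minimal sketch, assuming the layout from Step 2 (so the file lives at ~/kafka/config/server.properties) and that the setting in question is the broker option delete.topic.enable; the function name is made up for this example:

```shell
#!/bin/sh
# Path follows the layout from Step 2; override KAFKA_HOME if yours differs.
KAFKA_HOME="${KAFKA_HOME:-$HOME/kafka}"
CONFIG="$KAFKA_HOME/config/server.properties"

# enable_topic_deletion FILE: append the setting unless it is already present.
enable_topic_deletion() {
    grep -q '^delete\.topic\.enable' "$1" 2>/dev/null ||
        echo 'delete.topic.enable = true' >> "$1"
}

# Only touch the file if it exists, so this is a no-op on a machine without Kafka.
if [ -f "$CONFIG" ]; then
    enable_topic_deletion "$CONFIG"
fi
```

Checking for an existing entry first keeps the script safe to run more than once.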

Kafka’s configuration options are specified in server.properties. Open this file with vi or your favorite editor.

Let’s add a setting that will allow us to delete Kafka topics. Press i to insert text, and add delete.topic.enable = true to the bottom of the file.

When you are finished, press ESC to exit insert mode and :wq to write the changes to the file and quit.

Now that we’ve configured Kafka, we can move on to creating systemd unit files for running and enabling it on startup.

Step 4 — Creating Systemd Unit Files and Starting the Kafka Server

In this section, we will create systemd unit files for the Kafka service. This will help us perform common service actions such as starting, stopping, and restarting Kafka in a manner consistent with other Linux services.

Zookeeper is a service that Kafka uses to manage its cluster state and configurations. It is commonly used in many distributed systems as an integral component. If you would like to know more about it, visit the official Zookeeper docs.

Create the unit file for zookeeper and enter its unit definition into the file:

/etc/systemd/system/zookeeper.service

The [Unit] section specifies that Zookeeper requires networking and the filesystem to be ready before it can start.

The [Service] section specifies that systemd should use the zookeeper-server-start.sh and zookeeper-server-stop.sh shell files for starting and stopping the service. It also specifies that Zookeeper should be restarted automatically if it exits abnormally.

Save and close the file when you are finished editing.
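A unit definition matching this description might look like the sketch below. It is an assumption, not a verbatim copy of any official file: the paths follow the /home/kafka/kafka layout from Step 2, and User=kafka and the [Install] section are additions for illustration. The unit is rendered into a scratch directory so that it can be reviewed before being copied into place.

```shell
#!/bin/sh
# Render the unit into a scratch directory first; copy it into place once reviewed.
OUT_DIR="${OUT_DIR:-$(mktemp -d)}"

cat > "$OUT_DIR/zookeeper.service" <<'EOF'
[Unit]
Requires=network.target remote-fs.target
After=network.target remote-fs.target

[Service]
Type=simple
User=kafka
ExecStart=/home/kafka/kafka/bin/zookeeper-server-start.sh /home/kafka/kafka/config/zookeeper.properties
ExecStop=/home/kafka/kafka/bin/zookeeper-server-stop.sh
Restart=on-abnormal

[Install]
WantedBy=multi-user.target
EOF

echo "wrote $OUT_DIR/zookeeper.service"
# Then, as a sudo user:
#   sudo cp "$OUT_DIR/zookeeper.service" /etc/systemd/system/zookeeper.service
```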

Next, create the systemd service file for kafka and enter its unit definition into the file.

The [Unit] section specifies that this unit file depends on zookeeper.service. This will ensure that zookeeper gets started automatically when the kafka service starts.

The [Service] section specifies that systemd should use the kafka-server-start.sh and kafka-server-stop.sh shell files for starting and stopping the service. It also specifies that Kafka should be restarted automatically if it exits abnormally. Save and close the file when you are finished editing.
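The kafka unit can be sketched the same way. As before, this is an assumed definition built from the description: the file name kafka.service, the /home/kafka/kafka paths, User=kafka, and the [Install] section are illustrative choices.

```shell
#!/bin/sh
# Render the unit into a scratch directory first; copy it into place once reviewed.
OUT_DIR="${OUT_DIR:-$(mktemp -d)}"

cat > "$OUT_DIR/kafka.service" <<'EOF'
[Unit]
Requires=zookeeper.service
After=zookeeper.service

[Service]
Type=simple
User=kafka
ExecStart=/home/kafka/kafka/bin/kafka-server-start.sh /home/kafka/kafka/config/server.properties
ExecStop=/home/kafka/kafka/bin/kafka-server-stop.sh
Restart=on-abnormal

[Install]
WantedBy=multi-user.target
EOF

echo "wrote $OUT_DIR/kafka.service"
# Then, as a sudo user:
#   sudo cp "$OUT_DIR/kafka.service" /etc/systemd/system/kafka.service
```

Requires= pulls in zookeeper.service when kafka starts, and After= makes sure it is started first.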

Now that the units have been defined, start Kafka with systemctl.

To ensure that the server has started successfully, check the journal logs for the kafka unit. The output should show that the Kafka server has started.

You now have a Kafka server listening on port 9092.

While we have started the kafka service, if we were to reboot our server, it would not be started automatically. To enable kafka on server boot, enable the unit with systemctl.

Now that we’ve started and enabled the services, let’s check the installation.
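The three actions in this part of the step could be run as sketched below, assuming the unit is named kafka as above. The run helper is an illustrative wrapper that only prints the commands unless APPLY=1 is set.

```shell
#!/bin/sh
# Print each command by default; set APPLY=1 to actually execute them.
run() {
    if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "+ $*"; fi
}

run sudo systemctl start kafka     # also starts zookeeper through Requires=
run sudo journalctl -u kafka       # inspect the log of the kafka unit
run sudo systemctl enable kafka    # start kafka automatically on boot
```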

Step 5 — Testing the Installation

Let’s publish and consume a “Hello World” message to make sure the Kafka server is behaving correctly. Publishing messages in Kafka requires:

- A producer, which enables the publication of records and data to topics.

- A consumer, which reads messages and data from topics.

First, create a topic named TutorialTopic. Kafka should report that the topic was created.

You can create a producer from the command line using the kafka-console-producer.sh script. It expects the Kafka server’s hostname, port, and a topic name as arguments.

Publish the string 'Hello, World' to the TutorialTopic topic by piping it to the producer.

Next, you can create a Kafka consumer using the kafka-console-consumer.sh script. It expects the Kafka server’s hostname and port, along with a topic name as arguments.

Start a consumer that reads messages from TutorialTopic. Note the use of the --from-beginning flag, which allows the consumption of messages that were published before the consumer was started.

If there are no configuration issues, you should see Hello, World in your terminal.

The script will continue to run, waiting for more messages to be published to the topic. Feel free to open a new terminal and start a producer to publish a few more messages. You should be able to see them all in the consumer’s output.
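The round trip described above can be sketched with three small functions. The function names are made up for this example, and the addresses are assumptions: localhost:2181 for ZooKeeper (its default port) and localhost:9092 for the broker from Step 4. The sketch does nothing on a machine where Kafka is not installed under KAFKA_HOME.

```shell
#!/bin/sh
KAFKA_HOME="${KAFKA_HOME:-$HOME/kafka}"   # layout from Step 2
ZK="localhost:2181"                       # assumed ZooKeeper address
BROKER="localhost:9092"                   # broker address from Step 4
TOPIC="TutorialTopic"

create_topic() {
    "$KAFKA_HOME/bin/kafka-topics.sh" --create --zookeeper "$ZK" \
        --replication-factor 1 --partitions 1 --topic "$TOPIC"
}

publish() {   # publish MESSAGE
    echo "$1" | "$KAFKA_HOME/bin/kafka-console-producer.sh" \
        --broker-list "$BROKER" --topic "$TOPIC" > /dev/null
}

consume() {   # runs until interrupted with CTRL+C
    "$KAFKA_HOME/bin/kafka-console-consumer.sh" --bootstrap-server "$BROKER" \
        --topic "$TOPIC" --from-beginning
}

# Run the round trip only where Kafka is actually installed.
if [ -x "$KAFKA_HOME/bin/kafka-topics.sh" ]; then
    create_topic && publish "Hello, World" && consume
fi
```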

When you are done testing, press CTRL+C to stop the consumer script. Now that we have tested the installation, let’s move on to installing KafkaT.

Step 6 — Installing KafkaT (Optional)

KafkaT is a tool from Airbnb that makes it easier for you to view details about your Kafka cluster and perform certain administrative tasks from the command line. Because it is a Ruby gem, you will need Ruby to use it. You will also need ruby-devel and build-related packages such as make and gcc to be able to build the other gems it depends on. Install them using yum.

You can now install KafkaT using the gem command.

KafkaT uses .kafkatcfg as the configuration file to determine the installation and log directories of your Kafka server. It should also have an entry pointing KafkaT to your ZooKeeper instance.

Create a new file called .kafkatcfg and add lines that specify the required information about your Kafka server and Zookeeper instance:

~/.kafkatcfg

Save and close the file when you are finished editing.

You are now ready to use KafkaT. For a start, you can use it to view details about all Kafka partitions.

The output will list TutorialTopic, as well as __consumer_offsets, an internal topic used by Kafka for storing client-related information. You can safely ignore lines starting with __consumer_offsets.

To learn more about KafkaT, refer to its GitHub repository.
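The whole step might be scripted roughly as follows. Treat every detail as an assumption to check against the KafkaT README: the package list only contains the packages named above (more build packages may be needed), the configuration keys and the /tmp/kafka-logs log directory reflect a default single-node setup, and run and write_kafkatcfg are helper names invented for this sketch. Commands are only printed unless APPLY=1 is set.

```shell
#!/bin/sh
# Print each command by default; set APPLY=1 to actually execute them.
run() {
    if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "+ $*"; fi
}

# write_kafkatcfg DEST: JSON config pointing KafkaT at Kafka and ZooKeeper.
write_kafkatcfg() {
    cat > "$1" <<'EOF'
{
  "kafka_path": "~/kafka",
  "log_path": "/tmp/kafka-logs",
  "zk_path": "localhost:2181"
}
EOF
}

run sudo yum install ruby ruby-devel make gcc
run sudo gem install kafkat
if [ "${APPLY:-0}" = "1" ]; then
    write_kafkatcfg "$HOME/.kafkatcfg"
fi
run kafkat partitions    # lists details about all partitions
```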

Step 7 — Setting Up a Multi-Node Cluster (Optional)

If you want to create a multi-broker cluster using more CentOS 7 machines, you should repeat Step 1, Step 4, and Step 5 on each of the new machines. Additionally, you should make the following changes in the server.properties file for each:

- The value of the broker.id property should be changed such that it is unique throughout the cluster. This property uniquely identifies each server in the cluster and must be a non-negative integer. For example, 1, 2, etc.

- The value of the zookeeper.connect property should be changed such that all nodes point to the same ZooKeeper instance. This property specifies the Zookeeper instance’s address and follows the <HOSTNAME/IP_ADDRESS>:<PORT> format. For example, 203.0.113.0:2181, 203.0.113.1:2181, etc.
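Both changes amount to rewriting one line each in server.properties. A minimal sketch, assuming GNU sed (as shipped with CentOS) and the file layout from Step 2; set_property is a helper written for this example, and the values shown are placeholders to adapt per node:

```shell
#!/bin/sh
# set_property FILE KEY VALUE: replace an existing KEY=... line or append one.
set_property() {
    if grep -q "^$2=" "$1"; then
        sed -i "s|^$2=.*|$2=$3|" "$1"
    else
        echo "$2=$3" >> "$1"
    fi
}

CONFIG="${KAFKA_HOME:-$HOME/kafka}/config/server.properties"
if [ -f "$CONFIG" ]; then
    set_property "$CONFIG" broker.id 1                          # must differ on every node
    set_property "$CONFIG" zookeeper.connect 203.0.113.0:2181   # same on every node
fi
```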

If you want to have multiple ZooKeeper instances for your cluster, the value of the zookeeper.connect property on each node should be an identical, comma-separated string listing the IP addresses and port numbers of all the ZooKeeper instances.

Step 8 — Restricting the Kafka User

Now that all of the installations are done, you can remove the kafka user’s admin privileges. Before you do so, log out and log back in as any other non-root sudo user. If you are still running the same shell session you started this tutorial with, simply type exit.

Remove the kafka user from the wheel group.

To further improve your Kafka server’s security, lock the kafka user’s password using the passwd command. This makes sure that nobody can directly log into the server using this account.

At this point, only root or a sudo user can log in as kafka, by switching to the account with sudo su.

In the future, if you want to unlock it, use passwd with the -u option.

You have now successfully restricted the kafka user’s admin privileges.
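The restriction could be applied with commands along these lines. The exact invocations are assumptions based on the description above, and the run helper is an illustrative wrapper that only prints the commands unless APPLY=1 is set.

```shell
#!/bin/sh
# Print each command by default; set APPLY=1 to actually execute them.
run() {
    if [ "${APPLY:-0}" = "1" ]; then "$@"; else echo "+ $*"; fi
}

run sudo gpasswd -d kafka wheel    # remove kafka from the wheel group
run sudo passwd kafka -l           # lock the kafka user's password

# Later, from a sudo-capable account:
#   sudo su - kafka                # log in as kafka despite the locked password
#   sudo passwd kafka -u           # unlock the password again
```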

Conclusion

You now have Apache Kafka running securely on your CentOS server. You can make use of it in your projects by creating Kafka producers and consumers using Kafka clients, which are available for most programming languages. To learn more about Kafka, you can also consult its documentation.